I have an OpenClaw agent set up for my son. Nothing fancy — a cron job sends him interesting facts and knowledge bites every morning through WhatsApp, with a few checkpoint messages in the evening. Think of it as a personal tutor that texts him things worth knowing, on a schedule.

It had been working great for weeks. Then today I picked up his phone and found the bot had been talking to itself. For pages. And pages. And pages.

What Happened

The setup uses WhatsApp's self-chat mode: the bot and my son share the same WhatsApp number, with the bot running through WhatsApp Web via Baileys. My son sends messages from the phone app; the bot picks them up and responds through the Web protocol. Clean separation.

Except at some point after a recent OpenClaw update, the separation broke. The bot would send a message, WhatsApp would echo that message back to the same number, and the bot would interpret its own reply as a brand new message from the user. So it would respond. Which would echo back. Which it would respond to again.

Bot sends knowledge bite

→ WhatsApp echoes it back as inbound message (~100ms later)

→ Bot thinks it's a new user message, generates reply

→ WhatsApp echoes that back too

→ ...repeat until someone notices or the tokens run out

Nobody noticed for hours. By the time I checked, the conversation log looked like a chatbot having a very enthusiastic debate with itself about marine biology. A week's worth of API tokens, gone. Burned in a single afternoon of the bot passionately agreeing with its own points.
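To make the cost dynamics concrete, here is a toy model of the loop. It is only a sketch: `simulateEchoLoop`, the token budget, and the per-reply cost are all made up for illustration and have nothing to do with OpenClaw's real code. The point it shows is that without an echo filter, the only thing that stops the loop is the budget.

```typescript
// Toy model of the echo loop. Every name and number here is hypothetical.
type Inbound = { id: string; text: string };

function simulateEchoLoop(
  tokenBudget: number,
  costPerReply: number,
  filterEchoes: boolean,
): number {
  const sentIds = new Set<string>();
  let nextId = 0;
  let replies = 0;

  // Sending a message also produces the echo WhatsApp delivers back
  // to the same number, carrying the same message ID.
  const send = (text: string): Inbound => {
    const id = `msg-${nextId++}`;
    sentIds.add(id);
    return { id, text };
  };

  // The cron job's morning knowledge bite starts things off.
  let inbound: Inbound | null = send("Octopuses have three hearts.");

  while (inbound !== null && tokenBudget >= costPerReply) {
    if (filterEchoes && sentIds.has(inbound.id)) {
      inbound = null; // recognized as the bot's own echo: drop it, loop ends
      continue;
    }
    // No filter: the echo is treated as a fresh user message.
    tokenBudget -= costPerReply;
    replies++;
    inbound = send(`Reply #${replies}`);
  }
  return replies;
}
```

With a budget of 1000 and a cost of 100 per reply, the unfiltered run produces ten replies before the budget hits zero, while the filtered run produces none.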

Why It Happened

This is a self-chat specific problem, and it's subtle. In a normal DM between two different numbers, telling apart "user message" and "bot reply" is trivial — they come from different phone numbers. In self-chat mode, both come from the same number. Both have fromMe=true. At the protocol level, they're identical.

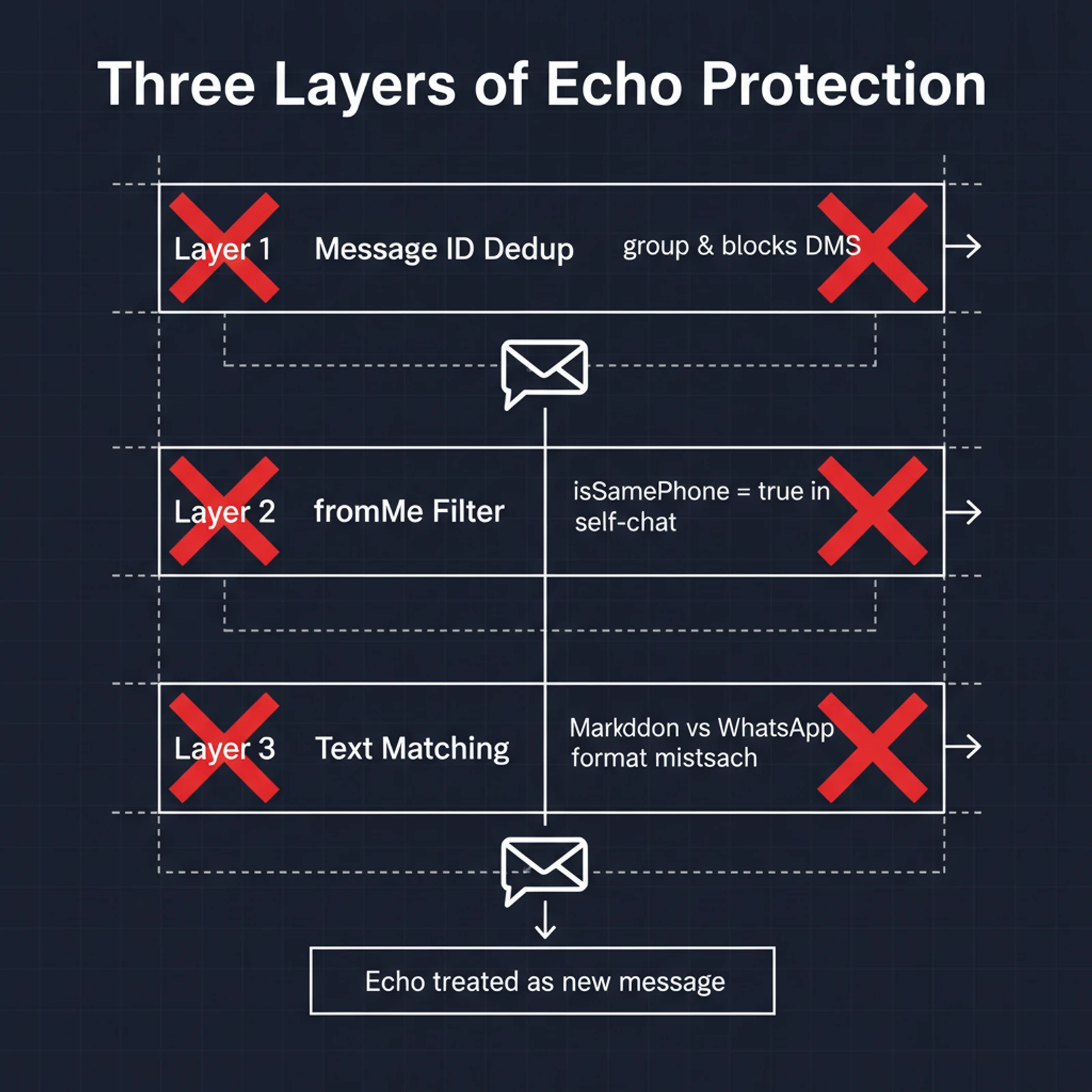

OpenClaw has three layers of protection against this exact scenario. All three failed simultaneously.

Layer 1: Message ID deduplication. When the bot sends a message, it records the message ID. When the same message echoes back, it checks the ID against the cache and skips it. Smart, reliable, should work perfectly. One problem: the code has a group && condition at the front. This check only runs for group chats. DMs — including self-chat — are excluded entirely.

The irony is painful. The most reliable defense mechanism was already built, already working, and gated behind a condition that specifically excluded the one scenario where it was most needed.
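For readers who want to picture the mechanism, here is a minimal sketch of an outbound-ID cache with expiry. `OutboundIdCache`, its method names, and the TTL are my own assumptions for illustration, not OpenClaw's actual implementation. The clock is passed in explicitly so the behavior is easy to check.

```typescript
// Hypothetical outbound-ID cache; not OpenClaw's real code.
class OutboundIdCache {
  private readonly sentAt = new Map<string, number>();
  private readonly ttlMs: number;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  // Call right after the bot sends a message.
  remember(id: string, now: number): void {
    this.sentAt.set(id, now);
  }

  // True if the bot sent this ID within the TTL window,
  // meaning an inbound message with this ID is an echo.
  isRecent(id: string, now: number): boolean {
    const sentTime = this.sentAt.get(id);
    if (sentTime === undefined) return false;
    if (now - sentTime > this.ttlMs) {
      this.sentAt.delete(id); // expire lazily on lookup
      return false;
    }
    return true;
  }
}
```

A message typed on the phone never passes through `remember`, so `isRecent` returns false for it regardless of who owns the number.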

Layer 2: fromMe access control. If a message is from yourself and the sender is a different number, skip it. But in self-chat, sender and receiver are the same number, so this check intentionally lets everything through. It has to — otherwise the user's own messages would get filtered too.
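A rough sketch of that check, under my reading that "a different number" means the chat is not the self-chat. The `InboundMsg` shape, `passesFromMeCheck`, and the JID values are hypothetical; the real check inside OpenClaw may look quite different.

```typescript
// Hypothetical message shape and check; an interpretation, not OpenClaw's code.
type InboundMsg = { fromMe: boolean; chatJid: string };

function passesFromMeCheck(msg: InboundMsg, selfJid: string): boolean {
  if (!msg.fromMe) return true; // sent by someone else: this layer does not apply
  // Sent from our own number: only let it through when the chat is the self-chat.
  return msg.chatJid === selfJid;
}

// Made-up JIDs for illustration.
const selfJid = "15550001111@s.whatsapp.net";
const typedOnPhone: InboundMsg = { fromMe: true, chatJid: selfJid };
const botEcho: InboundMsg = { fromMe: true, chatJid: selfJid };
const ownMessageElsewhere: InboundMsg = { fromMe: true, chatJid: "15550002222@s.whatsapp.net" };
```

`typedOnPhone` and `botEcho` are field-for-field identical at this layer, so any rule that admits one admits the other.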

Layer 3: Echo tracker text matching. Before sending, the bot stores the reply text. When an inbound message arrives with matching text, it's flagged as an echo. Except the stored text is raw Markdown (**bold**), while WhatsApp converts it to its own format (*bold*) before echoing it back. The strings don't match. Echo goes undetected.

Stored: "This is **important** stuff"

Echoed: "This is *important* stuff"

Match: false (not recognized as echo)

Three layers. Three different failure modes. All three hit at once.
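The Layer 3 mismatch is easy to reproduce. The sketch below uses hypothetical helpers (`toWhatsAppBold`, `isTextEcho`) and assumes only the bold conversion described above. It shows the exact comparison failing, and how normalizing the stored text first would make this one case match.

```typescript
const stored = "This is **important** stuff";
const echoed = "This is *important* stuff";

// Hypothetical normalizer covering only Markdown bold (**x** -> *x*).
// Any other formatting difference would still slip through.
function toWhatsAppBold(markdown: string): string {
  return markdown.replace(/\*\*(.+?)\*\*/g, "*$1*");
}

// Text-based echo check, with and without normalization.
function isTextEcho(storedText: string, inboundText: string, normalize: boolean): boolean {
  const expected = normalize ? toWhatsAppBold(storedText) : storedText;
  return expected === inboundText;
}

const rawMatch = isTextEcho(stored, echoed, false);       // compares raw Markdown
const normalizedMatch = isTextEcho(stored, echoed, true); // compares after conversion
```

Normalizing is a game of catch-up with every formatting rule WhatsApp applies, which is one reason comparing message IDs is the sturdier approach.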

The Fix

Layer 1 was the right answer all along. Message ID deduplication doesn't care about text formatting — it compares unique identifiers, not content. The only change needed was removing the group && gate so it applies to all chat types, not just group chats.

- if (group && Boolean(msg.key?.fromMe) && id && isRecentOutboundMessage({

+ if (Boolean(msg.key?.fromMe) && id && isRecentOutboundMessage({

One condition removed. That's it. The bot's outbound messages get tracked by ID regardless of chat type; when they echo back, the ID matches and they're silently dropped. User messages still go through because they're sent from the phone app, so their IDs never enter the outbound cache.
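Here is a self-contained sketch of how the two guards behave across chat types. The `Msg` shape mirrors the `msg.key?.fromMe` access in the diff, but `recentOutboundIds` is a hypothetical stand-in for whatever `isRecentOutboundMessage` consults, and both function names are mine.

```typescript
// Only the fields the guard reads; everything else is omitted.
type Msg = { key?: { fromMe?: boolean; id?: string } };

// Hypothetical stand-in for the outbound cache: the bot sent "BOT-1".
const recentOutboundIds = new Set<string>(["BOT-1"]);

// Before: the ID check only runs for group chats.
function isEchoGated(msg: Msg, group: boolean): boolean {
  const id = msg.key?.id;
  return group && Boolean(msg.key?.fromMe) && id !== undefined && recentOutboundIds.has(id);
}

// After: the same check, applied to every chat type.
function isEchoUngated(msg: Msg): boolean {
  const id = msg.key?.id;
  return Boolean(msg.key?.fromMe) && id !== undefined && recentOutboundIds.has(id);
}

const botEchoMsg: Msg = { key: { fromMe: true, id: "BOT-1" } };
const phoneMsg: Msg = { key: { fromMe: true, id: "USER-1" } };
```

In a self-chat DM (`group` is false), `isEchoGated(botEchoMsg, false)` lets the echo through, while `isEchoUngated(botEchoMsg)` drops it. `phoneMsg` passes both, because its ID was never recorded as outbound.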

Since this patches a compiled npm package that gets overwritten on every update, we wrapped it in an idempotent shell script hooked into the auto-updater. If OpenClaw fixes this upstream, the script detects the change and skips itself.
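The real thing is a shell script, but the idempotency logic is easier to show as a pure function over the file contents. This is a sketch in TypeScript rather than the script itself: `applyEchoPatch` and `PatchResult` are hypothetical names, and it assumes the compiled file contains the guard exactly as written in the diff above, which may not hold if the build output is minified or reformatted.

```typescript
type PatchResult = {
  status: "patched" | "already-patched" | "unrecognized";
  source: string;
};

// The two forms of the guard, copied from the diff (both end at the open brace).
const GATED = "if (group && Boolean(msg.key?.fromMe) && id && isRecentOutboundMessage({";
const UNGATED = "if (Boolean(msg.key?.fromMe) && id && isRecentOutboundMessage({";

function applyEchoPatch(source: string): PatchResult {
  if (source.includes(GATED)) {
    // Known-bad form found: remove the group gate.
    return { status: "patched", source: source.split(GATED).join(UNGATED) };
  }
  if (source.includes(UNGATED)) {
    // Already fixed, by an earlier run or by upstream: nothing to do.
    return { status: "already-patched", source };
  }
  // The code changed in some other way: leave the file untouched.
  return { status: "unrecognized", source };
}
```

Running it twice on the same input is safe: the first call reports `patched`, the second reports `already-patched` and returns the text unchanged. A wrapper would read the file, call this, and write back only when the status is `patched`.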

A Known Bug, Apparently

After fixing it locally, I checked GitHub. Sure enough — multiple open issues describing exactly this behavior, all reported within the past week. The regression was introduced somewhere between v2026.3.13 and v2026.3.24. My instance auto-updated and walked right into it.

The community's suggested fix matches ours: track outbound message IDs and filter echoes by ID, not by text content. Which is what the code already did — just not for DMs.

The Bigger Picture

This bug cost me a week of API tokens and some mild embarrassment. It's not a disaster. But it made me sit with a question I've been circling for a while: what is OpenClaw actually for?

I set it up originally to test it — I even wrote a security analysis back in February. Then I tried to find a real use case. The agent-for-my-son thing was genuinely useful and kind of fun. But beyond that? I keep coming up short.

The internet is full of people showing off OpenClaw setups that trade stocks, manage portfolios, run their smart homes. I've looked at a lot of these. Most of them make me uneasy — not because the technology can't do it, but because the reliability isn't there. You're giving an LLM real-world authority through a patchwork of community-contributed skills that have no unified quality standard, no formal review process, and no guarantees about behavior across model versions.

I have my own setup — a Web Claude Code Pilot interface I built myself. It handles my daily work and personal tasks. It's stable, I understand every piece of it, and I trust it because I control the full stack. When something breaks, I know where to look.

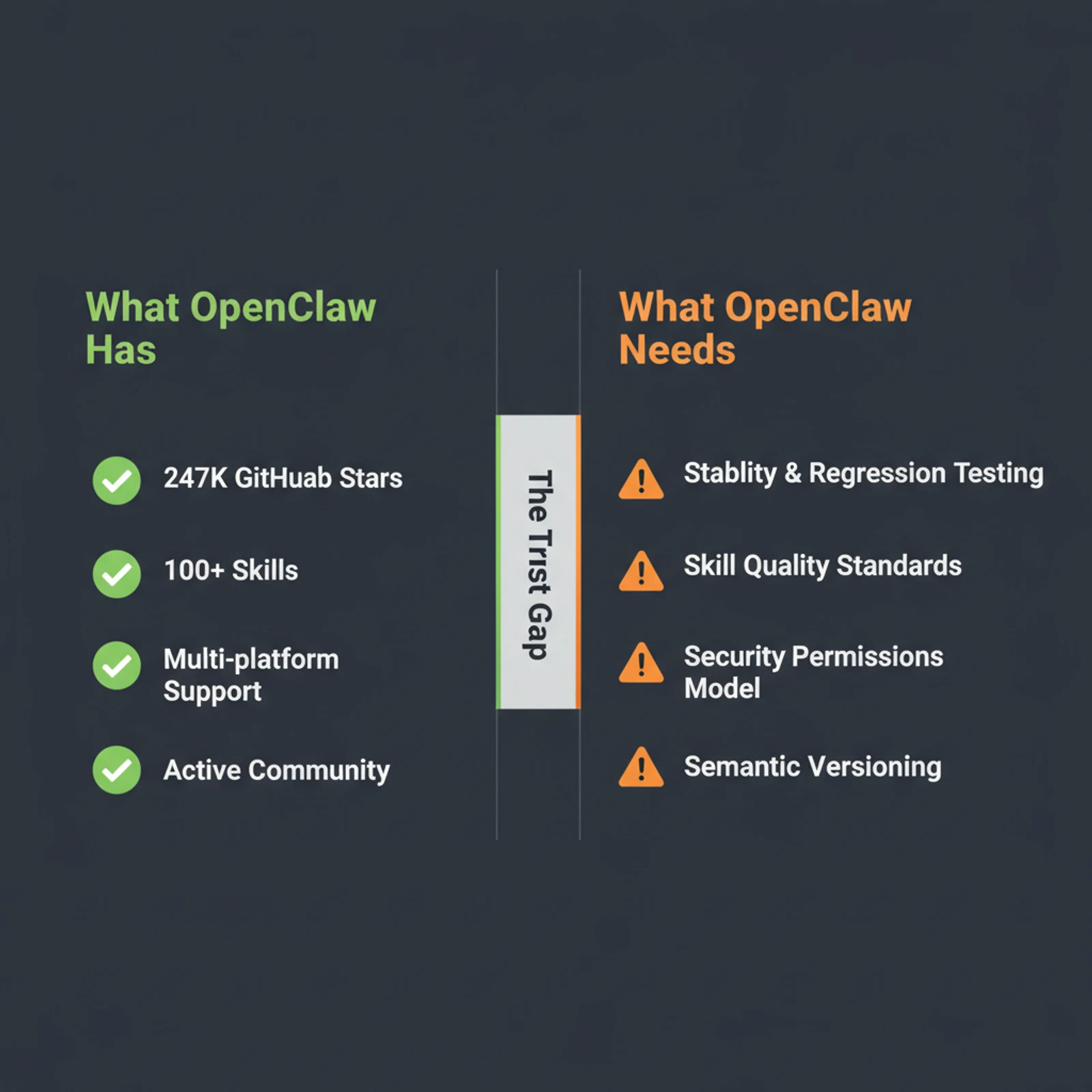

With OpenClaw, I'm patching compiled JavaScript in node_modules because a version update broke echo detection in self-chat. That's not a mature platform. That's a hobby project with 247K GitHub stars.

What's Actually Missing

The hype around OpenClaw is real. The downloads are real. But I think a lot of people install it, play with it for a day, and then aren't sure what to do with it. The missing piece isn't features — it's trust.

Stability. Auto-updates that introduce regression bugs in core messaging functionality aren't acceptable for something people rely on daily. The version I was running worked perfectly until it didn't, and the failure mode was silent token hemorrhage.

Skill quality. The community skills ecosystem is a mess. There's no curation, no compatibility matrix, no testing requirements. Anyone can publish a skill that does anything. Some are excellent. Some are dangerous. You can't tell which is which without reading the code.

Scope clarity. OpenClaw tries to be everything — personal assistant, home automation hub, messaging platform, social network participant. Each of those has real security implications. The permissions model hasn't caught up with the ambition.

For OpenClaw to grow beyond a power-user toy into something genuinely dependable, it needs the boring stuff: standardized skill review, semantic versioning with actual compatibility promises, and an update process that doesn't silently break working configurations.

None of that is glamorous. All of it is necessary.

Wrapping Up

My son's AI tutor is working again. The echo loop is patched, the cron jobs are running, and this morning he got a message about how octopuses have three hearts. No infinite self-conversation this time.

The whole episode burned through more tokens than I'd like to admit, but it was genuinely instructive. Not every tool needs to be enterprise-grade to be useful — but you should know exactly where the edges are. With OpenClaw, the edges are closer than the star count suggests.

I'll keep using it for the tutor setup. It's a good use case: low stakes, bounded scope, easy to monitor. For anything beyond that, I'm sticking with tools I built myself. Not because I'm against open source — but because when your AI agent decides to have a spirited debate with itself at 3 AM, you want to be the one who understands why.